It is a shocking reality in 2026 that AI nudify apps are still being offered on the Google Play Store and the Apple App Store, despite mounting public outrage and regulatory scrutiny. Artificial intelligence has undeniably revolutionized our world with incredibly useful applications. However, the dark side of this technology is becoming impossible to ignore.

One of the most concerning areas of abuse involves AI “deepfakes.” At the start of this year, a disturbing trend was highlighted by digital watchdogs. Malicious applications designed specifically to digitally strip individuals without their consent have become rampant on major smartphone platforms.

A comprehensive investigation by the Tech Transparency Project (TTP) has blown the lid off this growing crisis. Its findings indicate that, despite previous warnings, little to nothing has fundamentally changed in App Store and Google Play moderation protocols.

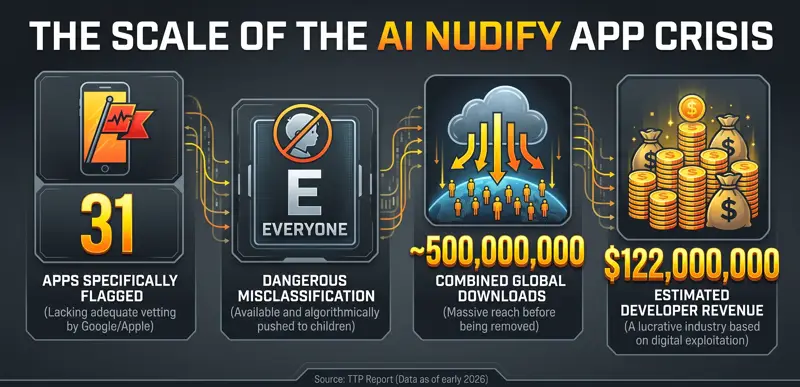

According to the Tech Transparency Project report, both digital storefronts continue to host a significant number of these controversial applications. Even more alarming is how these apps are categorized. Many of these highly inappropriate tools have bypassed safety filters and are actively marketed with an “E” for Everyone age rating.

This gross misclassification means that children can easily download, use, and be targeted by these malicious programs. The implications for child safety, cyberbullying, and digital privacy are catastrophic.

The Mechanics of AI Deepfake Image Editors

To understand the threat, we must understand how these tools operate. So-called “nudify” apps are, at their core, sophisticated AI deepfake image editors. They leverage advanced machine learning algorithms to alter existing photographs.

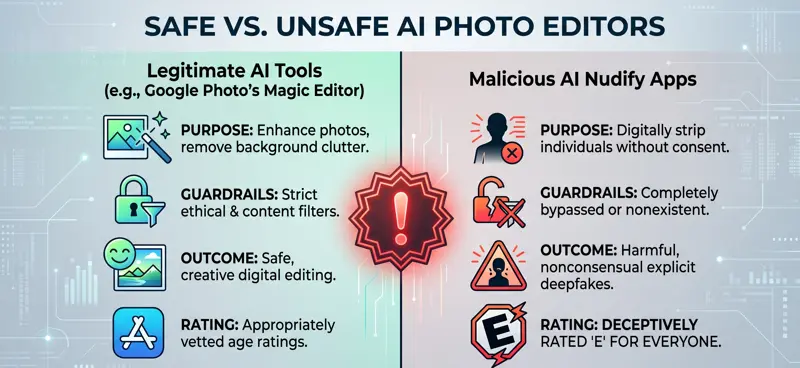

On the surface, the underlying technology is similar to mainstream, harmless tools like Google Photos’ Magic Editor. Both use generative AI to edit images, remove unwanted elements, and synthesize new, realistic details to fill the gaps.

However, there is a massive and dangerous distinction. Legitimate photo editing software includes strict, hard-coded safety features. These guardrails prevent the AI from generating illicit, violent, or nonconsensual sexual imagery.

The malicious nudify apps completely lack these essential ethical guardrails. They are explicitly designed and marketed for one sinister purpose. Generative AI gives these unregulated tools the ability to digitally strip subjects in standard photographs.

This allows bad actors to generate highly realistic, pornographic deepfakes from innocent pictures of literally anyone—including minors, classmates, colleagues, and celebrities.

| Feature | Legitimate AI Editors (e.g., Magic Editor) | AI Nudify Apps |
|---|---|---|
| Primary Purpose | Enhancing photos, removing background clutter | Generating nonconsensual explicit imagery |
| Safety Guardrails | Strictly enforced content filters | Completely bypassed or non-existent |
| App Store Rating | Appropriate age ratings enforced | Often falsely rated “E” for Everyone |

The Staggering Scale of the Problem

The existence of these apps is bad enough, but the sheer volume of their distribution is what makes this a full-blown crisis. The TTP’s report specifically identified 31 of these apps that had not been adequately vetted by platform moderators.

Because they carried an “E” rating, these apps were not just available; they were sometimes algorithmically pushed to younger demographics. This opens children up to severe avenues for harm, both as potential victims of deepfake bullying and as users exposed to inappropriate digital environments.

The weaponization of generative AI in mainstream app stores represents one of the most profound failures of digital moderation in the modern tech era.

The full report provides a long, terrifying rundown of the capabilities of these specific applications. It details how easily they can be manipulated to cause irreparable reputational and psychological damage to innocent victims.

Furthermore, it highlights a dark reality: this is an incredibly lucrative business model for rogue developers. According to the data gathered by researchers, the ecosystem surrounding these tools is booming financially.

The apps examined in the TTP’s report had generated an estimated $122 million in collective revenue. Even more shocking, these malicious programs had been downloaded almost 500 million times globally before being flagged.

App Store and Google Play Moderation: A Reactive Failure

When confronted with the staggering evidence compiled by the TTP, the tech giants were forced to respond. Google officially stated that many of the applications mentioned in the report had subsequently been removed from the Play Store.

Apple also quietly purged a number of the flagged apps from its ecosystem, although the company refused to provide an official public comment on the matter at the time.

While the removal of these specific 31 apps is a positive step, it highlights a fundamental flaw in generative AI app safety. The moderation approach relies almost entirely on reactive measures. Apps are only removed after they have been flagged by third-party watchdogs or after the damage has already been done.

I have no doubt that Apple and Google move swiftly to delete these apps the moment they are made aware of them. However, their internal automated vetting processes are clearly failing to detect them during the initial submission phase.

| Metric Revealed by TTP | Data Point |
|---|---|
| Specific Apps Flagged with “E” Rating | 31 distinct applications |
| Estimated Combined Downloads | Nearly 500,000,000 |
| Estimated Developer Revenue | $122 million USD |
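
As a rough sanity check on the figures above, the two estimates can be combined into an average revenue per download. This is a back-of-the-envelope sketch, not a number taken from the TTP report: it assumes the revenue and download estimates cover the same set of apps, and it rounds "nearly 500 million" up to 500 million, so the result is likely slightly low. The constant and function names are illustrative only.

```python
# Figures quoted in this article; both are estimates.
ESTIMATED_REVENUE_USD = 122_000_000
ESTIMATED_DOWNLOADS = 500_000_000  # "nearly 500 million", rounded up (assumption)


def revenue_per_download(revenue_usd: float, downloads: int) -> float:
    """Return the average revenue in USD earned per download."""
    if downloads <= 0:
        raise ValueError("downloads must be positive")
    return revenue_usd / downloads


per_download = revenue_per_download(ESTIMATED_REVENUE_USD, ESTIMATED_DOWNLOADS)
print(f"Roughly ${per_download:.2f} per download")
```

Even at about a quarter of a dollar per download, the volume involved is what makes the business lucrative.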

The Push for Nonconsensual Deepfake Regulations

The problems caused by these AI deepfake image editors are obvious and devastating. They are destroying lives, facilitating severe cyberbullying in schools, and creating new avenues for digital harassment.

Because tech platforms are struggling to self-regulate, governments are finally stepping in. Legislators have already moved aggressively to ban these applications where possible.

For instance, the UK government has recently launched a dedicated attempt to ban the creation and distribution of these deepfakes for good. This is a crucial step forward, considering the real-world damage these apps are inflicting in educational institutions and workplaces.

In the United States, lawmakers are also scrambling to draft comprehensive nonconsensual deepfake regulations. The goal is to hold not just the users, but the developers and the hosting platforms legally accountable for the proliferation of this abusive technology.

Until platforms implement proactive AI scanning before apps are published, the “Whack-A-Mole” moderation strategy will continue to leave millions vulnerable.

The prevalence of these apps and their apparent financial value mean that bad actors will constantly try to sneak them past App Store guidelines. They often disguise them as innocent photo enhancers or avatar creators.

A massive paradigm shift is required in how applications of this sort are rated, vetted, and dealt with. For more detailed insights into digital accountability, you can read the official findings directly from the Tech Transparency Project.

If generative AI continues to exist in this largely unrestricted form on mobile platforms, apps of this nature will only become more sophisticated and common. The tech industry must prioritize human safety over app store revenue immediately.

| Moderation Approach | Current Flaws | Required Solution |
|---|---|---|
| Reactive Takedowns | Damage is already done before removal | Proactive code analysis prior to publishing |
| Age Rating Systems | Developers lie to secure “E” for Everyone | Mandatory AI capability disclosures |
| Platform Liability | Stores face little legal consequence | Strict nonconsensual deepfake regulations |
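
To make the "mandatory AI capability disclosures" idea in the table above more concrete, here is a minimal sketch of a pre-publication check that holds a submission for human review when a child-accessible rating is combined with a risky declared capability. Everything in it is hypothetical: the `Submission` type, the capability labels, and the rating set are invented for illustration and do not correspond to any real Apple or Google review API.

```python
from dataclasses import dataclass, field

# Hypothetical disclosure labels; not part of any real app store API.
RISKY_CAPABILITIES = {"generative_image_edit", "body_or_clothing_synthesis"}
# Ratings treated as child-accessible in this sketch (assumption).
CHILD_ACCESSIBLE_RATINGS = {"E"}


@dataclass
class Submission:
    """A hypothetical app submission with developer-declared metadata."""
    name: str
    age_rating: str
    declared_capabilities: set = field(default_factory=set)


def needs_human_review(sub: Submission) -> bool:
    """Hold the app if a child-accessible rating meets a risky capability."""
    is_child_accessible = sub.age_rating in CHILD_ACCESSIBLE_RATINGS
    has_risky_capability = bool(sub.declared_capabilities & RISKY_CAPABILITIES)
    return is_child_accessible and has_risky_capability


queue = [
    Submission("Photo Enhancer", "E", {"generative_image_edit"}),
    Submission("Sudoku", "E"),
]
held = [s.name for s in queue if needs_human_review(s)]
print(held)
```

A check like this only helps if disclosures are mandatory and independently verified, since the core problem described here is developers misrepresenting what their apps do. The sketch does not address that verification step.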

Frequently Asked Questions

What exactly are AI nudify apps?

They are malicious smartphone applications that use generative artificial intelligence to digitally remove clothing from standard photographs of people, creating realistic but completely fabricated explicit deepfake images without the subject’s consent.

Why are these apps considered dangerous for children?

Beyond the obvious privacy violations, a major issue highlighted by the TTP report is that many of these apps bypassed moderation to secure an “E” (Everyone) rating. This made them easily accessible to minors, leading to severe cases of cyberbullying in schools.

How did these apps bypass App Store and Google Play moderation?

Developers often disguise these malicious tools as standard photo editors, avatar generators, or beauty filters during the submission process. Once approved, the apps’ backend AI capabilities are used for illicit purposes that the initial automated security scans did not catch.

What were the main findings of the Tech Transparency Project report?

The report revealed that at least 31 unregulated nudify apps were available on major app stores, many rated for children. It also exposed that these apps had generated roughly $122 million in revenue and amassed nearly 500 million downloads.

Are Google and Apple doing anything to stop this?

Both companies removed the specific apps flagged by the TTP report once they were notified. However, critics argue that their moderation is too reactive, and they need to implement proactive safety measures to stop these apps from being published in the first place.

What are nonconsensual deepfake regulations?

These are emerging laws and legislative efforts (like those currently being pursued by the UK and US governments) aimed at making the creation, distribution, and hosting of nonconsensual explicit AI deepfakes a severe criminal offense.

How can I protect my family from AI deepfake image editors?

Ensure strict parental controls are active on your child’s devices to monitor app downloads. Educate your children about the dangers of sharing personal photos online, and encourage them to report any instances of digital harassment immediately.

Disclaimer: This article is for informational purposes only. The statistics and reports referenced regarding app moderation and the Tech Transparency Project reflect the data available as of early 2026. The landscape of AI technology and platform guidelines is subject to rapid change. Always refer to official app store policies for the most current safety guidelines.